How to Set Up Docker with Detectron

Abstract: After hearing a lot of talk about Docker in machine learning, I finally got the chance to learn it and set it up. This post covers what Docker is and how to use it.

Docker Logic

In short, Docker has two concepts that are easy to confuse: the image and the container.

- An image is a snapshot of your environment. It stays fixed unless you update the image. It is like a Class.

- A container is the run-time instance of an image, just like a = Class(). It handles all run-time configuration, such as the IP address or port numbers.
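The class/instance analogy can be spelled out as a toy Python model. This is only an illustration of the idea, not how Docker works internally; the `Image` and `Container` classes and the port numbers are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """A fixed environment snapshot, analogous to a class definition."""
    name: str
    packages: tuple


@dataclass
class Container:
    """A run-time instance of an Image with its own run-time settings."""
    image: Image
    name: str
    ports: dict = field(default_factory=dict)  # host port -> container port


# One image can back many containers, each with different run-time config.
torch_image = Image(name="pytorch/pytorch", packages=("torch", "caffe2"))
a = Container(image=torch_image, name="a", ports={8888: 8888})
b = Container(image=torch_image, name="b", ports={9999: 8888})

print(a.image is b.image)  # both containers share the same snapshot
print(a.ports != b.ports)  # their run-time settings differ
```

The image is declared frozen to mirror the point that a snapshot does not change while containers built from it come and go.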

Using Docker for app deployment is easy, but using it for code development is HARD.

This is because any code changes go away once the container is deleted (for example, when you call exit in the terminal of a container started with --rm). You can press ctrl-p ctrl-q to detach from the terminal without stopping the container, but this won't make a big difference.

BTW, use docker attach <container_name> to get back to the environment.

The way I found to make this work is to create an image that only mounts your code folders as an external path inside it, and then run an instance of this image alongside your environment containers.
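A simpler variant of the same idea, which is not the separate code image described above, is to bind-mount the host code folder straight into the environment container with `-v`. The sketch below only builds and prints the command; the host and container paths are hypothetical placeholders, and the image tag and container name are the ones used later in this post.

```python
import shlex


def build_run_command(image, host_code_dir, container_code_dir, name):
    """Return a `docker run` command that bind-mounts a host code folder."""
    return [
        "docker", "run",
        "--name", name,
        "--rm",  # the container is removed on exit; mounted code stays on the host
        "-it",
        "-v", f"{host_code_dir}:{container_code_dir}",
        image,
    ]


cmd = build_run_command(
    image="pytorch/pytorch:nightly-devel-cuda9.2-cudnn7",
    host_code_dir="/home/me/code",          # hypothetical host path
    container_code_dir="/workspace/code",   # hypothetical path inside the container
    name="mytorch",
)
print(shlex.join(cmd))
```

Anything written under the mounted path lives on the host, so it survives deleting the container.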

Install Docker

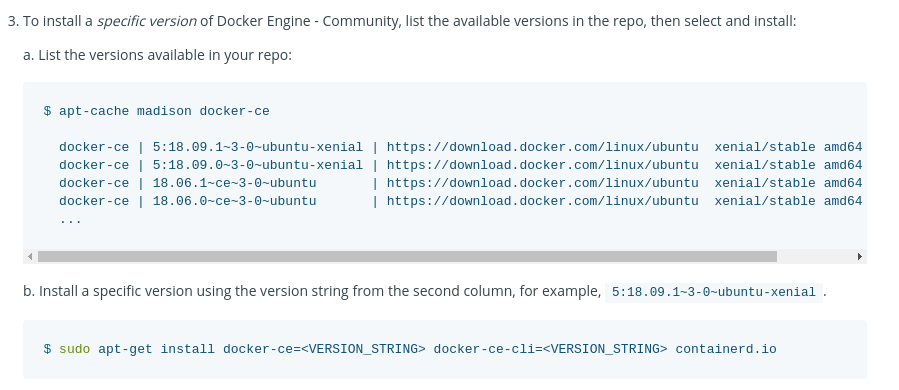

The official site is pretty good. Make sure you install the CE version.

https://docs.docker.com/install/linux/docker-ce/ubuntu/

And do not overdo this:

Solve the Docker Permission

To avoid typing 'sudo' before every docker command, run this in your favourite shell, then completely log out of your account and log back in (or exit your SSH session and reconnect; if in doubt, reboot the computer you are trying to run Docker on!):

sudo usermod -a -G docker $USER
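Group membership is read when a session starts, which is why the re-login is needed. A minimal sketch for checking whether the current session already sees the group; it assumes a Unix-like system (the `grp` module is not available on Windows), and the function name is my own.

```python
import grp
import os


def current_session_in_group(group_name):
    """Return True if the running process belongs to the named group."""
    try:
        gid = grp.getgrnam(group_name).gr_gid
    except KeyError:
        # The group does not exist on this machine.
        return False
    # os.getgroups() lists supplementary groups of this process, so it
    # reflects the login session, not just the entry in /etc/group.
    return gid in os.getgroups() or gid == os.getegid()


print("docker group active:", current_session_in_group("docker"))
```

If this prints False right after running usermod, the change has probably not reached your session yet, and logging out and back in should fix it.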

Install PyTorch: The examples

In this case, I want to use Facebook's Detectron as an example.

First things first: I don't have a fully configured Docker image at the end of this post. If I make one in the future, I will link it here.

Alerts

Also, if your GPU has little memory, you won't be able to run the test at the end. You will get an out-of-memory error like this:

[E net_async_base.cc:377] [enforce fail at context_gpu.cu:415] error == cudaSuccess. 2 vs 0. Error at: /pytorch/caffe2/core/context_gpu.cu:415: out of memory
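Before going further, it can help to check how much memory the GPU actually has. A minimal sketch, assuming PyTorch is importable where you run it; the helper names are my own, and since I don't know the exact amount the test needs, no threshold is hard-coded.

```python
def bytes_to_gib(num_bytes):
    """Convert a byte count to GiB."""
    return num_bytes / 1024 ** 3


def gpu_total_memory_gib(device_index=0):
    """Return total GPU memory in GiB, or None if it cannot be determined."""
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    props = torch.cuda.get_device_properties(device_index)
    return bytes_to_gib(props.total_memory)


total = gpu_total_memory_gib()
if total is None:
    print("No CUDA device visible (or PyTorch is not installed).")
else:
    print(f"GPU 0 total memory: {total:.1f} GiB")
```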

Detectron

This is Detectron:

https://github.com/facebookresearch/Detectron

The Dockerfile it contains did not work on my machine, so I found another tutorial.

What you need:

- Docker CE (see the install instructions above)

- A PyTorch GPU-enabled docker container

- An NVIDIA GPU pass-through Docker container

- A code pass-through Docker container (for Peng's code)

Once you have Docker CE installed, go for PyTorch, but be careful about the version:

This version has Caffe2 bundled and uses CUDA 9 (because CUDA 10 breaks things):

sudo docker pull pytorch/pytorch:nightly-devel-cuda9.2-cudnn7

Once you have the PyTorch image, go here and install the NVIDIA GPU pass-through:

https://github.com/NVIDIA/nvidia-docker

After that, you can create the environment container with:

sudo nvidia-docker run --name mytorch --rm -it pytorch/pytorch:nightly-devel-cuda9.2-cudnn7

Install Verification

We can then verify PyTorch is correctly installed and works fine with the GPU.

python -c "import torch;print(torch.cuda.get_device_name(0))"

To verify Caffe2 is installed correctly:

pip install protobuf

pip install future

python -c "from caffe2.python import workspace; print(workspace.NumCudaDevices())"
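The separate one-liners can also be rolled into one small import check. This sketch only confirms that the modules import; it does not exercise the GPU. The helper name `can_import` is my own, and `google.protobuf` and `future` are the module names that the protobuf and future packages install.

```python
import importlib


def can_import(module_name):
    """Return True if the module imports cleanly, False on any failure."""
    try:
        importlib.import_module(module_name)
        return True
    except Exception:
        # Missing package, missing shared library, etc. all count as failure.
        return False


for name in ("torch", "caffe2.python", "google.protobuf", "future"):
    status = "ok" if can_import(name) else "MISSING"
    print(f"{name}: {status}")
```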

Install Detectron

There is really no Detectron installation; you just clone it. But you want to clone it into the code container, otherwise it will be gone after you exit the container. (The code container will come in the future.)

git clone https://github.com/facebookresearch/Detectron

pip install -r ./Detectron/requirements.txt

cd Detectron && make

Testing:

python ./detectron/tests/test_spatial_narrow_as_op.py

Fix the missing dependency and verify OpenCV is fine.

Credit goes to: https://www.kaggle.com/c/inclusive-images-challenge/discussion/70226

**You need to type a lowercase 'y' at the end, not 'Y' as asked.**

apt-get update

apt-get install libgtk2.0-dev

python -c "import cv2; print(cv2.__version__)"

Install this testing helper:

git clone https://github.com/cocodataset/cocoapi.git

cd cocoapi/PythonAPI

make install

Test Detectron with online database

This download is about 500 MB, so you may want to download it manually first.

Make sure you are in the Detectron/ folder:

python tools/infer_simple.py --cfg configs/12_2017_baselines/e2e_mask_rcnn_R-101-FPN_2x.yaml --output-dir /tmp/detectron-visualizations --image-ext jpg --wts https://dl.fbaipublicfiles.com/detectron/35861858/12_2017_baselines/e2e_mask_rcnn_R-101-FPN_2x.yaml.02_32_51.SgT4y1cO/output/train/coco_2014_train:coco_2014_valminusminival/generalized_rcnn/model_final.pkl demo
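If you would rather fetch the weights once and reuse them, a small download-once helper is enough. This is a sketch under assumptions: the function name and the cache path in the note below are my own, and I believe `--wts` also accepts a local file path instead of a URL, but please check that against your Detectron checkout.

```python
import os
import shutil
import urllib.request


def fetch_once(url, dest_path):
    """Download url to dest_path unless the file is already there."""
    if os.path.exists(dest_path):
        return dest_path
    os.makedirs(os.path.dirname(dest_path) or ".", exist_ok=True)
    tmp_path = dest_path + ".part"
    # Write to a temporary name first so an interrupted download
    # is never mistaken for a complete file.
    with urllib.request.urlopen(url) as response, open(tmp_path, "wb") as out:
        shutil.copyfileobj(response, out)
    os.replace(tmp_path, dest_path)
    return dest_path
```

To use it, call fetch_once with the model_final.pkl URL from the command above and a path under a folder that is mounted from the host (for example a hypothetical /workspace/code/weights/model_final.pkl), then pass that path to --wts.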